Being the energy-conserving environmentalist I am (read: tightass), I look for ways to reduce unnecessary power consumption. I have quite a few devices that are plugged in 24x7 but get limited use. This includes my HTPC setup, which comprises a Mac Mini i5 (Boot Camp Windows 8) and a Synology DS413j NAS. In the previous blog post I outlined the costs involved in running the Synology DS413j. The DS413j does not support system hibernation, only disk hibernation. From my testing, it pulls about 11 W continuously with the hard disks hibernated. In the grand scheme of things, this is not a big deal.
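To put the 11 W figure in perspective, here is a rough back-of-the-envelope sketch. The `annual_kwh` helper is a made-up name, and the 8-hours-a-day schedule is purely an illustrative assumption rather than a measured usage pattern.

```sh
# Annual energy in kWh for a device drawing $1 watts for $2 hours a day
# (hours default to 24, i.e. always on). Hypothetical helper for illustration.
annual_kwh() {
    awk -v w="$1" -v h="${2:-24}" 'BEGIN { printf "%.1f\n", w * h * 365 / 1000 }'
}

annual_kwh 11      # idle NAS left on around the clock -> 96.4
annual_kwh 11 8    # same draw, but powered for only 8 hours a day -> 32.1
```

Multiply the result by your own tariff per kWh to get a yearly cost.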

So what can be done to reduce power consumption? Synology supports scheduled power-on / power-off events through their DSM. There is also the very useful Advanced Power Manager package, which takes this a step further by preventing the shutdown whilst any detected network/disk activity is above a specific threshold. This way, if you are watching a movie and midway reach the scheduled power-off time, the NAS won’t shut down until disk/network activity falls below the configured threshold.
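How the package measures activity is not covered here, so the following is only a rough sketch of the general idea of threshold-based deferral on a Linux box: sample an interface's byte counters twice and compare the rate to a limit. The function names, the `eth0` interface and the 200 bytes/sec limit are all assumptions for illustration.

```sh
# Total bytes (received + transmitted) for interface $1, read from a
# /proc/net/dev style file ($2, defaulting to the live /proc/net/dev).
net_bytes() {
    awk -v ifc="$1" '
        {
            sub(/^[ \t]+/, "")                 # strip leading padding
            if (index($0, ifc ":") == 1) {
                sub(/^[^:]*:/, "")             # drop "eth0:" so fields are counters
                printf "%.0f\n", $1 + $9       # field 1 = rx bytes, field 9 = tx bytes
            }
        }' "${2:-/proc/net/dev}"
}

# Succeeds when traffic between two samples is below the threshold.
# $1 = earlier byte count, $2 = later byte count,
# $3 = seconds between samples, $4 = threshold in bytes per second
is_idle() {
    [ $(( ($2 - $1) / $3 )) -lt "$4" ]
}
```

A scheduler built on this would take `a=$(net_bytes eth0)`, sleep for a few seconds, take `b=$(net_bytes eth0)`, and only proceed with the power off when `is_idle "$a" "$b" 10 200` succeeds.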

In the screenshot above, I have configured Power Off times for every day of the week, but not necessarily Power On times. This means the NAS will always shut down at night if it is running, but will not necessarily start again automatically in the morning.

Whilst the above package is great, I wanted to go a step further and support remote shutdown of the NAS triggered by a desktop shortcut and/or remote control event. In my HTPC setup, I’m using EventGhost to map infrared remote control events to specific actions. I leverage the On Screen Menu EventGhost plugin to display a menu of options on my (television) screen for interacting with the HTPC. This includes launching XBMC, suspending and shutting down the Mac Mini, and sending a Wake-on-LAN packet to the Synology. I want to add a new option to this menu to shut down the NAS.

One would think remote shutdown is pretty simple. In fact it can be very simple: enable SSH on the NAS, then use something like PuTTY to connect as the “root” user, supplying a “Remote command” option like the following

You would simply save the PuTTY session under a specific name (e.g. root-shutdown), then trigger it using a shortcut such as "C:\Program Files (x86)\PuTTY\PUTTY.EXE" -load "root-shutdown"

But what if you want to grant the ability to power off the NAS to some non-root user? Would it not be great to have some user, say the fictitious user “netshutdown”, who simply by connecting to the NAS through telnet / SSH would result in the NAS shutting down?

This would be easily accomplished using something like sudo, whereby administration commands can easily be delegated. However, “sudo” is not available in the standard DSM 4.2 install on the DS413j. Simple then, let’s set suid on the poweroff executable so that it runs as root. However, the poweroff executable is actually a symbolic link to /bin/busybox. Setting suid on busybox also makes no difference:

Setting suid on busybox does however allow “su” to function from a non-root user.
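The symlink detail matters because permission bits live on the file a link points to, not on the link itself. Below is a small sketch for inspecting that; the helper names are made up. One plausible explanation for the behaviour above (an assumption, not verified on this NAS) is that BusyBox itself drops elevated privileges for applets it does not treat as setuid-worthy, which would be consistent with su working while poweroff does not.

```sh
# Print what a path really is: a symlink (with its target) or something else.
describe_path() {
    if [ -L "$1" ]; then
        echo "$1 -> $(readlink "$1")"
    elif [ -e "$1" ]; then
        echo "$1 is not a symlink"
    else
        echo "$1 does not exist"
    fi
}

# Succeeds when the setuid bit is set on the file the path resolves to.
# The -u test follows symlinks, so it reports on the link target.
has_setuid() {
    [ -u "$1" ]
}
```

On the NAS, `describe_path /sbin/poweroff` should show the link to /bin/busybox described above, and `has_setuid /sbin/poweroff` tells you whether the suid bit is currently set on that shared binary.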

I’m not comfortable setting suid on the busybox executable, given that pretty much every command in /bin and a number from /sbin are linked to it. One extreme word of caution!!! Do not make the mistake of executing chmod a-x on the busybox executable or anything linked to it! You will hose your system. I was extremely lucky to have perl installed on my NAS and not to have logged out of my root session, so I was able to use perl’s chmod function to restore permissions! (what a relief)

If you search for busybox, poweroff, and suid, you find a number of results that talk about techniques such as using /etc/busybox.conf to call out specific applets and who can run them, creating C wrapper programs that use execve to call busybox, or using setuid and system to call /sbin/poweroff. I tried all of these and none of them worked with the compiled busybox executable on my NAS; I would receive Permission denied / Operation not permitted errors.

Finally however, I cracked it.

I created a wrapper program that rather than call /sbin/poweroff, calls a shell script owned by root, which in turn triggers poweroff.

The program (shutdown.c) is as follows:

#include <stdio.h> /* printf function */
#include <stdlib.h> /* system function */
#include <sys/types.h> /* uid_t type used by setuid */
#include <unistd.h> /* setuid function */

int main()
{
    printf("Invoking /bin/shutdown.sh ...\n");
    setuid(0);
    system("/bin/shutdown.sh" );
    return 0;
}

/bin/shutdown.sh is as follows:

echo Triggering poweroff command

/sbin/poweroff

I followed the developer guide to determine how to compile C programs specifically for the NAS. The DS413j uses a Marvell Kirkwood mv6282 ARMv5te CPU, so I needed the toolchain for Marvell 88F628x.

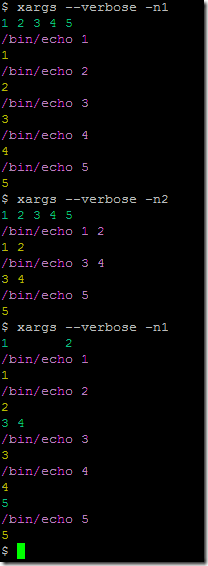

As I had no native/physical Linux machine available for the compilation, I decided to use VirtualBox on Windows with the Lubuntu 12.10 VirtualBox image, a lightweight version of Ubuntu. I set the network card to bridged, started the image, authenticated as lubuntu/lubuntu and updated/added some core packages:

sudo apt-get update

sudo apt-get install build-essential dkms gcc make

sudo apt-get install linux-headers-$(uname -r)

sudo -s

cd /tmp

wget http://sourceforge.net/projects/dsgpl/files/DSM%204.1%20Tool%20Chains/Marvell%2088F628x%20Linux%202.6.32/gcc421_glibc25_88f6281-GPL.tgz

tar zxpf gcc421_glibc25_88f6281-GPL.tgz -C /usr/local/

cat > /tmp/shutdown.c <<'EOF'
#include <stdio.h> /* printf function */
#include <stdlib.h> /* system function */
#include <sys/types.h> /* uid_t type used by setuid */
#include <unistd.h> /* setuid function */

int main()
{
    printf("Invoking /bin/shutdown.sh ...\n");
    setuid(0);
    system("/bin/shutdown.sh" );
    return 0;
}
EOF

/usr/local/arm-none-linux-gnueabi/bin/arm-none-linux-gnueabi-gcc shutdown.c -o shutdown

lubuntu@lubuntu-VirtualBox:/tmp$ /usr/local/arm-none-linux-gnueabi/bin/arm-none-linux-gnueabi-gcc shutdown.c -o shutdown

lubuntu@lubuntu-VirtualBox:/tmp$ ls -ltr

total 16

-rw-rw-r-- 1 lubuntu lubuntu 308 Aug 22 01:29 shutdown.c

-rwxrwxr-x 1 lubuntu lubuntu 6715 Aug 22 01:29 shutdown

lubuntu@lubuntu-VirtualBox:/tmp$
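Before uploading, it can be worth confirming that the cross compiler really produced an ARM binary rather than one for the build VM. The `file` command will tell you this directly; the sketch below does the same check by hand by reading the ELF header's machine field. The function names are made up, and it assumes a little-endian ELF file.

```sh
# Print the ELF e_machine value of file $1. The field is a 16-bit integer at
# byte offset 18; this sketch assumes a little-endian ELF file.
elf_machine() {
    set -- $(od -An -tu1 -j18 -N2 "$1")
    [ $# -eq 2 ] || return 1          # file too short to hold an ELF header
    echo $(( $1 + $2 * 256 ))
}

# Succeeds when the file targets ARM (e_machine 40, EM_ARM).
is_arm_elf() {
    m=$(elf_machine "$1") && [ "$m" -eq 40 ]
}
```

For example, `is_arm_elf /tmp/shutdown && echo "ARM binary"` on the build VM; a binary built with the VM's native gcc would report a different machine value (62 on x86-64).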

Now that the shutdown executable was created, I uploaded it to the NAS to the /bin directory.

Next I needed the user/group account infrastructure in place on the NAS in order to trigger it. I created a user named “netshutdown” and a group named “shutdown” using the DiskStation Web UI Control Panel User/Group widgets. I also made sure the SSH service was enabled (Control Panel > (Network Services >) Terminal > Enable SSH Service).

If you try to SSH to the NAS as the newly created user with username/password authentication, you will see that you are not presented with a shell. This is because Synology locks the user down, which can be seen by viewing the passwd file while connected as root:

media> cat /etc/passwd

root:x:0:0:root:/root:/bin/ash

lp:x:4:7:lp:/var/spool/lpd:/sbin/nologin

ftp:x:21:21:Anonymous FTP User:/nonexist:/sbin/nologin

anonymous:x:21:21:Anonymous FTP User:/nonexist:/sbin/nologin

smmsp:x:25:25:Sendmail Submission User:/var/spool/clientmqueue:/sbin/nologin

postfix:x:125:125:Postfix User:/nonexist:/sbin/nologin

dovecot:x:143:143:Dovecot User:/nonexist:/sbin/nologin

spamfilter:x:783:1023:Spamassassin User:/var/spool/postfix:/sbin/nologin

nobody:x:1023:1023:nobody:/home:/sbin/nologin

admin:x:1024:100:System default user:/var/services/homes/admin:/bin/sh

guest:x:1025:100:Guest:/nonexist:/bin/sh

mshannon:x:1026:100::/var/services/homes/mshannon:/sbin/nologin

netshutdown:x:1027:100::/var/services/homes/netshutdown:/sbin/nologin

Notice the netshutdown user has the shell set to “/sbin/nologin”, and the home directory set to “/var/services/homes/netshutdown”. There is no such “homes” directory on my instance.
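Rather than eyeballing the whole file, the two fields of interest can be pulled out for a single user. This is a minimal sketch; `user_home_shell` is a hypothetical helper name, and the optional second argument exists only so it can be pointed at a copy of the file.

```sh
# Print "home shell" for user $1 from a passwd-format file
# ($2, defaulting to /etc/passwd). Fields 6 and 7 are home and login shell.
user_home_shell() {
    awk -F: -v u="$1" '$1 == u { print $6, $7 }' "${2:-/etc/passwd}"
}
```

With the listing above, `user_home_shell netshutdown` prints `/var/services/homes/netshutdown /sbin/nologin`, and a quick `[ -d /var/services/homes/netshutdown ]` confirms whether that home directory exists.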

I edited /etc/passwd and changed

netshutdown:x:1027:100::/var/services/homes/netshutdown:/sbin/nologin

to

netshutdown:x:1027:65536::/home/netshutdown:/bin/sh

65536 is the group id of the new shutdown group:

media> cat /etc/group

#$_@GID__INDEX@_$65536$

root:x:0:

lp:x:7:lp

ftp:x:21:ftp

smmsp:x:25:admin,smmsp

users:x:100:

administrators:x:101:admin

postfix:x:125:postfix

maildrop:x:126:

dovecot:x:143:dovecot

nobody:x:1023:

shutdown:x:65536:netshutdown
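The group id and membership can also be checked without reading the file by eye. A small sketch, with made-up function names, that works on any group-format file:

```sh
# Print the numeric id of group $1 from a group-format file
# ($2, defaulting to /etc/group).
group_gid() {
    awk -F: -v g="$1" '$1 == g { print $3 }' "${2:-/etc/group}"
}

# Succeeds when user $2 appears in the member list (4th field) of group $1.
group_has_member() {
    awk -F: -v g="$1" -v u="$2" '
        $1 == g {
            n = split($4, m, ",")
            for (i = 1; i <= n; i++) if (m[i] == u) found = 1
        }
        END { exit(found ? 0 : 1) }' "${3:-/etc/group}"
}
```

Against the listing above, `group_gid shutdown` prints `65536`, which is the value that goes into the fourth field of the netshutdown entry in /etc/passwd.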

I then created the home directory for the user:

mkdir -p /home/netshutdown

chown netshutdown /home/netshutdown

Test it out …

media> su - netshutdown

BusyBox v1.16.1 (2013-04-16 20:15:54 CST) built-in shell (ash)

Enter 'help' for a list of built-in commands.

media> pwd

/home/netshutdown

My user/group was now in place, so it was time to set permissions and configure the shutdown executable and shell script:

cd /bin

chown root.shutdown shutdown

chmod 4750 shutdown

media> ls -la shutdown*

-rwsr-x--- 1 root shutdown 6715 Aug 22 09:31 shutdown

cat > /bin/shutdown.sh <<EOF
echo Triggering poweroff command
/sbin/poweroff
EOF

media> ls -la shutdown.sh

-rw-r--r-- 1 root root 48 Aug 22 09:35 shutdown.sh

chmod 700 shutdown.sh

media> ls -la shutdown*

-rwsr-x--- 1 root shutdown 6715 Aug 22 09:31 shutdown

-rwx------ 1 root root 48 Aug 22 09:35 shutdown.sh
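The octal modes are worth decoding: in 4750 the leading 4 is the setuid bit, 7 gives root full access, 5 lets members of the shutdown group read and execute, and 0 shuts everyone else out. The sketch below turns an octal mode into the string `ls` prints so you can sanity-check a mode before applying it. The function names are made up for illustration.

```sh
# Render one octal permission digit ($1) as rwx letters. When $2 is non-zero
# the special bit for this position is set and $3 (s or t) replaces the x slot,
# upper-cased if the execute bit itself is off.
triplet() {
    r=-; w=-; x=-
    if [ $(( $1 & 4 )) -ne 0 ]; then r=r; fi
    if [ $(( $1 & 2 )) -ne 0 ]; then w=w; fi
    if [ $(( $1 & 1 )) -ne 0 ]; then x=x; fi
    if [ "$2" -ne 0 ]; then
        if [ "$x" = x ]; then x=$3; else x=$(printf '%s' "$3" | tr 'st' 'ST'); fi
    fi
    printf '%s%s%s' "$r" "$w" "$x"
}

# Convert an octal mode such as 4750 into the nine-character form ls prints.
mode_to_symbolic() {
    m=$1
    while [ "${#m}" -lt 4 ]; do m=0$m; done   # pad 750 to 0750
    s=${m%???}                                 # special bits: setuid=4, setgid=2, sticky=1
    rest=${m#?}
    u=${rest%??}                               # owner digit
    g=${rest#?}; g=${g%?}                      # group digit
    o=${rest#??}                               # others digit
    printf '%s%s%s\n' "$(triplet "$u" $(( s & 4 )) s)" \
                      "$(triplet "$g" $(( s & 2 )) s)" \
                      "$(triplet "$o" $(( s & 1 )) t)"
}
```

`mode_to_symbolic 4750` prints `rwsr-x---` and `mode_to_symbolic 700` prints `rwx------`, matching the two `ls -la` lines above (minus the leading file-type character).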

For the netshutdown user to automatically trigger the shutdown executable on connection, I had a few different options:

Option 1) Change the user’s login shell from /bin/sh to be /bin/shutdown

Option 2) Create a .profile file for the user, and trigger the /bin/shutdown command from the user’s .profile

I decided on the latter.

echo "/bin/shutdown" > /home/netshutdown/.profile

chown netshutdown /home/netshutdown/.profile
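One variation on the .profile approach, offered as a sketch rather than what was done here: prefixing the command with `exec` makes it replace the login shell, so the session should end when the wrapper exits instead of dropping to a prompt. The helper name is made up, and the trade-off is an assumption worth testing on your own NAS.

```sh
# Write a .profile into home directory $1 that replaces the login shell with
# command $2. Both the function name and the use of exec are illustrative.
write_shutdown_profile() {
    printf 'exec %s\n' "$2" > "$1/.profile"
}
```

It would be called as `write_shutdown_profile /home/netshutdown /bin/shutdown`, followed by the same chown as above. Either way, remember that any login shell for this user now triggers the power off, so do any setup in the user's home directory from the root session.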

Now it was time to test the power off …

At this stage, we can easily create a new PuTTY session that connects via SSH as user netshutdown with username/password authentication. We can invoke that saved session using a shortcut such as PUTTY.EXE -load "XXX Session Name".

If you are a sucker for punishment, you can make this thing a bit more complex by using public key authentication (rather than username/password). In its simplest form, you use a tool such as puttygen.exe to generate a key pair (private key and public key) for a particular user. You enable public key authentication on your NAS, and upload the user's public key to the NAS. You then configure PuTTY to authenticate using public key authentication and point it to the location of your private key. You can go a step further by encrypting your private key so that a passphrase must be supplied on login in order to decrypt the private key and authenticate. You can also use the PuTTY authentication agent (Pageant) to hold the decrypted private key in memory, and have PuTTY sessions consult Pageant for the private key on authentication. This blog post does a good job of describing the options.

To get public key authentication up and running quickly on your NAS, follow steps similar to these:

1) Run puttygen.exe

2) Generate a new SSH-2 RSA key without a passphrase

3) Save public key and private key to files (e.g publickey.txt and privatekey.ppk)

4) (This should have already been done) From Synology DiskStation UI, Go to Control Panel > (Network Services >) Terminal > Enable SSH Service

5) Next SSH using putty.exe to the NAS as the root user

6) Edit /etc/ssh/sshd_config

uncomment the following two lines

#PubkeyAuthentication yes

#AuthorizedKeysFile .ssh/authorized_keys

and save the file

7) Connect as the end-user concerned, create the ~/.ssh directory, and create the file ~/.ssh/authorized_keys

8) Add the public key text from above into the authorized_keys file and save it ...

ssh-rsa AAAAB3Nza......== ....

9) Change the permissions

chmod 700 ~/.ssh

chmod 644 ~/.ssh/authorized_keys

10) Open Putty and make the following changes to the session ...

Connection type: SSH

Connection->Data->Auto-login username: netshutdown

Connection->SSH->Auth->Private Key: Your Private Keyfile from above

11) Save the session - e.g. netshutdown-media-nas

12) Create a new shortcut on your desktop with the following target "C:\Program Files\PuTTY\putty.exe" -load "netshutdown-media-nas"
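If key authentication silently falls back to a password prompt, the permissions from step 9 are a common culprit: with OpenSSH's StrictModes setting (on by default in stock OpenSSH; whether DSM changes it is not covered here), keys are ignored when the home directory, ~/.ssh or authorized_keys is writable by group or others. A small sketch for checking that, with a made-up function name:

```sh
# Succeeds when $1 is writable by group or others, which OpenSSH's
# StrictModes check treats as unsafe for ~/.ssh and authorized_keys.
# -prune stops find from descending when $1 is a directory.
loosely_writable() {
    [ -n "$(find "$1" -prune \( -perm -020 -o -perm -002 \) -print)" ]
}
```

For example, run `loosely_writable ~/.ssh && echo "too permissive"` as the user concerned, and repeat for ~/.ssh/authorized_keys and the home directory itself. The 700 and 644 modes from step 9 pass this check.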

Notes - The private key above has no passphrase, which is essentially equivalent to storing a password in clear text in a file on the desktop. Where security is required, protect the private key with a passphrase. You can then run Pageant and supply the passphrase once so that it holds the decrypted private key in memory; this avoids being prompted for the passphrase every time a new session is established.